Reporting on Social Media Platforms

Facebook

HATE SPEECH

Facebook reporting page about hate speech

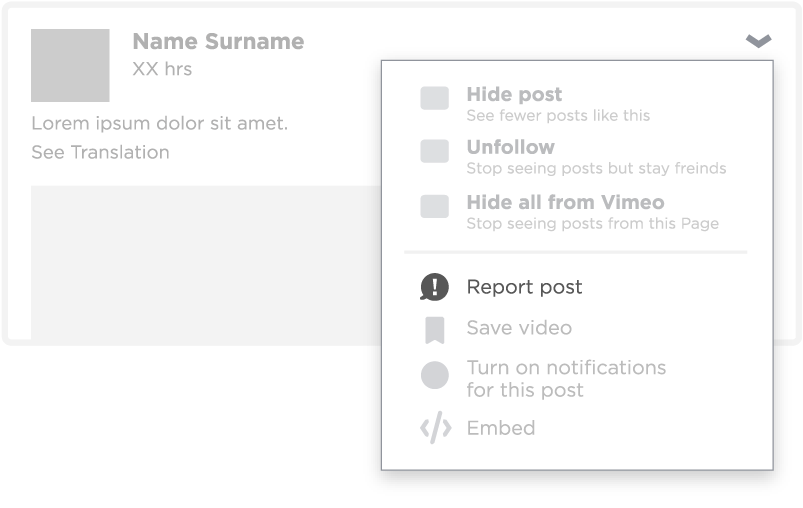

In nearly all cases, a report function is available in the drop-down menu next to the post, page or message.

If you do not have a Facebook account you can report using the online form.

All reports are reviewed and a response is provided by Facebook. In cases of severe and threatening hate speech or bullying you are advised to also report it to the relevant national bodies.

CYBER BULLYING

Facebook page about cases of cyber bullying

In addition, the Bullying Prevention Hub provides tips and resources for teenagers, parents and educators. This is a joint initiative with partners working on bullying. It reaches out to both victims and witnesses who want to help.

COMMUNITY STANDARDS

Facebook community standards page

The community standards describe the grounds on which Facebook may decide to take down content that has been reported. They include:

- Direct threats of harm to public and personal safety

- Bullying and harassment, which is content that appears to purposefully target private individuals with the intention of degrading or shaming them

- Sexual violence against adults and exploitation of children, which is content that threatens or promotes sexual violence or exploitation, including that of minors.

Twitter

HATE SPEECH

Twitter reporting page about hate speech

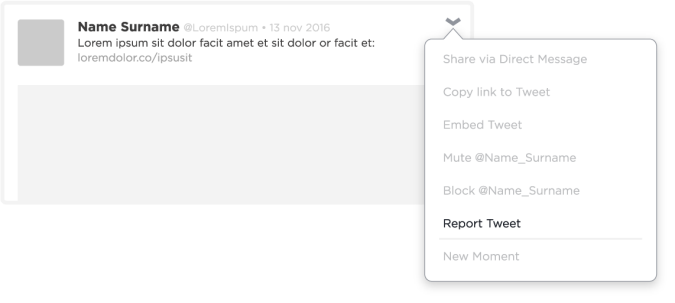

Most often, the report function is available in the tweet, profile or media content, under the drop-down menu with more options.

All reports are reviewed and a response is provided by Twitter. In cases of severe and threatening hate speech or bullying you are advised to also report it to the relevant national bodies.

CYBER BULLYING

There are no specific guidelines provided in cases of bullying. Twitter does offer tips to promote safe use of its services, which cover (prevention of) bullying for teenagers, families, parents and educators.

COMMUNITY STANDARDS

Twitter community standards page

Specific policy for dealing with content promoting child sexual exploitation

The Twitter rules provide the grounds for taking down content or suspending accounts. These include abusive behaviour, such as:

- Violent threats (direct or indirect)

- Harassment, which entails inciting or engaging in the targeted abuse or harassment of others

- Hateful conduct, which entails promoting violence against or directly attacking or threatening other people on the basis of race, ethnicity, national origin, sexual orientation, gender, gender identity, religious affiliation, age, disability or disease.

Instagram

HATE SPEECH

Reporting on Instagram depends on the platform you use:

- Web browser (PC/laptop)

- App (application on phone/tablet)

In most cases, the report function can be found in the drop-down menu with more options.

If you do not have an Instagram account you can report using the online form.

All reports are reviewed and a response is provided by Instagram. In cases of severe and threatening hate speech or bullying you are advised to also report it to the relevant national bodies.

CYBER BULLYING and SAFETY ONLINE

Online form to report harassment or cyber bullying

Instagram provides tips on the safe use of its services online, including when supporting friends and for parents.

COMMUNITY STANDARDS

The Instagram community guidelines describe how the company deals with violations of its terms of use. The guidelines call on users to:

- Avoid violating copyrights

- Follow national law

- Show respect for other members of the community or users.

Snapchat

HATE SPEECH, BULLYING AND HARASSMENT

Snapchat offers an online questionnaire to help users find answers to different types of safety concerns, including experiences of hate speech, bullying and harassment.

In most cases Snapchat recommends:

- Blocking the attacker

- Reviewing your privacy settings

- Reporting the incident to the national law enforcement agencies.

SAFETY ONLINE and COMMUNITY STANDARDS

The Snapchat safety centre offers safety tips for users and guidelines for parents and educators.

The community guidelines describe behaviour expected of users and call for:

- No nudity or sexual content

- Respect for privacy

- No threats

- No harassment, bullying or spamming.

VKontakte (VK)

In its terms of use, VKontakte (VK) gives no explanation of how it deals with hate speech or cyber bullying, nor of how to report it.

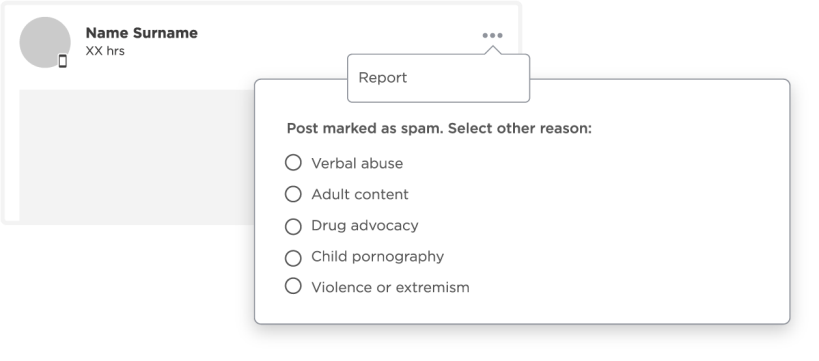

Users can report hate speech and cyber bullying directly to VK. The report function can be found next to the post, in the upper right-hand corner. After you press the button, the post is marked as spam and you can specify why you consider it to be spam. The options include:

- abuse

- material for adults only

- drug advocacy

- child pornography

- violence/extremism.

Depending on the post’s content, "abuse" and "violence/extremism" are likely to be the most relevant options for reporting hate speech and cyber bullying.

It is possible to report a "community" of users to the VK support service team. Your report should explain how your personal interests are harmed and provide direct links to prove your point.

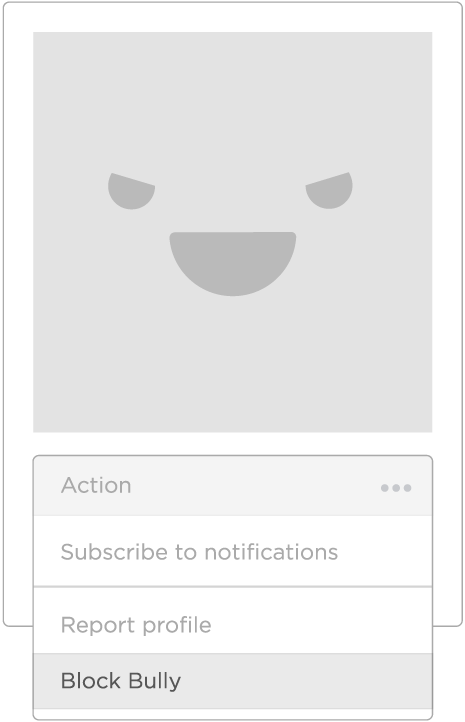

CYBER BULLYING

To protect yourself against cyber bullying, VK recommends adding the bully to your blacklist. Blacklisted accounts can no longer befriend you, send you messages or interact with your activities on VK.

COMMUNITY STANDARDS

The terms of use state that the site administration can, at its own discretion, delete any content or account (Article 7.2.2). Users are prohibited (Article 6.3.4) from uploading, saving, publishing, sharing or using in any other way information that:

- contains threats, discredits, offends, or denigrates the honour, dignity or business reputation of a person or infringes the inviolability of the personal life of other users or a third party

- promotes and/or supports racial, religious and/or ethnic hatred, promotes fascism or racial superiority

- contains extremist materials.